LRMS DIET Management

Overview of LRMS (Local Resource Management System)

Parallel resources are generally accessed through reservation systems, also called batch systems. To execute a job, a client submits a script containing the command line that launches the job, using the batch system's submission command and the options it needs. These options do not have to be given on the submission line. They can also appear in the script itself, written in the batch system's directive syntax. Several batch systems exist. Among them are LoadLeveler on IBM resources, OpenPBS and Torque, which are forks of the well-known PBS system, and OAR, developed by IMAG in Grenoble and used in Grid’5000, a research grid project. Most submitted jobs are parallel jobs written against the MPI standard and run with an implementation such as MPICH or LAM.

To use a batch system correctly, a client must provide several pieces of information, either on the submission line or in the script. These are the number of machines to allocate, how long they will be used (the walltime), and the number of MPI processes to launch. The process count is needed because most applications still use MPI-1.2 implementations, where the number of processes is fixed statically when the job is spawned.
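As an illustration, the sketch below turns those three pieces of information into a job script whose options are embedded as directives. It assumes a PBS/Torque-style system (`#PBS -l nodes=N:ppn=P`, `#PBS -l walltime=HH:MM:SS`, `$PBS_NODEFILE`) and an MPICH-style `mpirun`. The `BatchRequest` type and the helper names are hypothetical. Other batch systems, such as OAR or LoadLeveler, use a different directive syntax.

```python
from dataclasses import dataclass


@dataclass
class BatchRequest:
    nodes: int          # number of machines to allocate
    walltime_s: int     # reservation duration, in seconds
    mpi_procs: int      # number of MPI processes, fixed when the job is spawned
    command: str        # application command line (hypothetical example)


def format_walltime(seconds: int) -> str:
    """Format a duration as HH:MM:SS, the walltime syntax PBS accepts."""
    hours, rem = divmod(seconds, 3600)
    minutes, secs = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def pbs_script(req: BatchRequest) -> str:
    """Build a PBS-style job script with its options written as #PBS directives."""
    if req.nodes < 1 or req.mpi_procs < 1:
        raise ValueError("nodes and mpi_procs must be positive")
    # Processes per node, rounded up so that every MPI process gets a slot.
    ppn = -(-req.mpi_procs // req.nodes)
    return "\n".join([
        "#!/bin/sh",
        f"#PBS -l nodes={req.nodes}:ppn={ppn}",
        f"#PBS -l walltime={format_walltime(req.walltime_s)}",
        # MPI-1.2 style: the process count is given explicitly at launch.
        f"mpirun -np {req.mpi_procs} -machinefile $PBS_NODEFILE {req.command}",
    ]) + "\n"
```

For example, `pbs_script(BatchRequest(nodes=4, walltime_s=5400, mpi_procs=8, command="./app"))` requests 4 nodes with 2 processors each for 1 h 30 min and launches 8 MPI processes.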

Problem statement

The Grid will only be used if its resources are easily available to clients. Grid middleware is a good means of providing this transparent access, but few middleware systems can submit to batch systems transparently for the user, that is, in the same way as for sequential jobs, where clients provide only the service and its input parameters. Information such as the walltime and the number of machines and processes must then be determined by the middleware during its scheduling phase, when it chooses the parallel resources to submit to.
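A minimal sketch of what determining these parameters during scheduling might look like follows. For each candidate resource and node count, it predicts the completion time as queue wait plus run time and keeps the best option. It then derives the walltime from the predicted run time with a safety margin. The Amdahl's-law run-time model, the per-resource wait predictor, the margin, and all names here are assumptions for illustration, not DIET's actual scheduler.

```python
import math
from dataclasses import dataclass
from typing import Callable, Iterable, Tuple


@dataclass
class Resource:
    name: str
    max_nodes: int                   # largest allocation the batch system accepts
    wait_s: Callable[[int], float]   # hypothetical predictor: queue wait for n nodes


def predicted_runtime(seq_time_s: float, serial_fraction: float, nodes: int) -> float:
    """Amdahl's-law run-time estimate (an assumed application model)."""
    return seq_time_s * (serial_fraction + (1.0 - serial_fraction) / nodes)


def choose(resources: Iterable[Resource], seq_time_s: float,
           serial_fraction: float, margin: float = 1.2) -> Tuple[str, int, int]:
    """Return (resource name, node count, walltime in s) minimizing the
    predicted completion time, i.e. queue wait + run time."""
    best = None
    for res in resources:
        for nodes in range(1, res.max_nodes + 1):
            run = predicted_runtime(seq_time_s, serial_fraction, nodes)
            finish = res.wait_s(nodes) + run
            if best is None or finish < best[0]:
                # Pad the walltime so a slightly slower run is not killed.
                best = (finish, res.name, nodes, math.ceil(run * margin))
    if best is None:
        raise ValueError("no candidate resource")
    _, name, nodes, walltime = best
    return name, nodes, walltime
```

The client only supplies the service and its inputs. Here those inputs are reduced to a sequential time estimate, and the node count and walltime come out of the scheduling decision.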

Interfacing DIET with LRMS

We first considered Elagi, a library for submitting jobs to batch systems remotely. In our case, however, a DIET server (SeD) is deployed on each computing resource, so it runs locally alongside the batch system, and most of Elagi's features would go unused.

We are currently extending the DIET server API so that any parallel application can be called from a SeD without requiring additional information from the client.
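Because the SeD runs on the same machine as the batch system, it can call the submission command directly instead of going through a remote-access layer. The helper below is a hypothetical sketch of that idea, not part of the DIET API. It assumes PBS/Torque's `qsub`, which prints the new job's identifier on standard output and copies the script at submission time, so the temporary file can be deleted afterwards.

```python
import os
import subprocess
import tempfile


def submit_locally(script: str, submit_cmd=("qsub",)) -> str:
    """Write a batch script to a temporary file, submit it with the local
    batch system's command, and return the job identifier from stdout.

    Hypothetical helper; `submit_cmd` defaults to PBS/Torque's qsub.
    """
    fd, path = tempfile.mkstemp(suffix=".sh")
    try:
        with os.fdopen(fd, "w") as f:
            f.write(script)
        # Local call: no remote access layer is needed on the SeD's host.
        result = subprocess.run(
            [*submit_cmd, path], capture_output=True, text=True, check=True
        )
        return result.stdout.strip()
    finally:
        # Assumes the batch system has already copied the script.
        os.remove(path)
```

`check=True` makes a failed submission raise `subprocess.CalledProcessError`, which a SeD could report back to the client instead of returning an empty job identifier.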

Performance prediction and scheduling algorithms

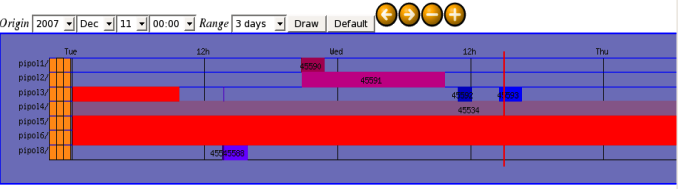

Simbatch is a module of the grid simulator Simgrid. It was designed and developed to model batch systems, both to test distributed scheduling algorithms realistically and to provide accurate performance prediction functions that can be embedded in the SeD.
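To give an idea of the kind of prediction function a batch-system model can supply, here is a deliberately simple one. It predicts when a new job would start on a cluster scheduled with strict first-come-first-served (no backfilling), treating each job's requested walltime as its actual run time. This is neither Simbatch's API nor its algorithm. The function name, its inputs, and the FCFS policy are assumptions for illustration.

```python
import heapq


def fcfs_start_time(total_nodes, running, queue, new_nodes):
    """Predict the start time (s from now) of a new job under strict FCFS.

    running: list of (remaining_s, nodes) for jobs already executing
    queue:   list of (walltime_s, nodes) for waiting jobs, in queue order
    Walltimes are used as run times, which is a pessimistic assumption.
    """
    ends = list(running)          # min-heap of (end time, nodes)
    heapq.heapify(ends)
    free = total_nodes - sum(n for _, n in running)
    now = 0
    # The new job is appended last; None marks it as the one to predict.
    for walltime, nodes in [*queue, (None, new_nodes)]:
        if nodes > total_nodes:
            raise ValueError("job larger than the machine")
        # Strict FCFS: wait until enough nodes are released, in end order.
        while free < nodes:
            end, n = heapq.heappop(ends)
            now = max(now, end)
            free += n
        if walltime is None:
            return now
        heapq.heappush(ends, (now + walltime, nodes))
        free -= nodes
```

With 8 nodes, a running 6-node job finishing in 100 s, and a queued 4-node job, `fcfs_start_time(8, [(100, 6)], [(50, 4)], 2)` predicts a start at 100 s, even though 2 nodes are idle now, because strict FCFS does not let the new job overtake the queued one. A backfilling policy would give a different answer, which is exactly the kind of difference a realistic batch simulator can capture.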